Enabling AVX Support in QEMU for Ollama Inference

Recently, I was configuring Ollama Web UI, a self-hosted, open-source ChatGPT alternative, on a Proxmox VM. However, when the Ollama backend attempted to run an inference job, the runner process terminated. The exact error was:

Error: llama runner process has terminated

The logs expanded on this with:

llama runner stopped with error: signal: illegal instruction (core dumped)

It turns out that Ollama requires a CPU with the AVX (Advanced Vector Extensions) instruction set, which speeds up floating-point calculations. In my case, the physical CPU did support AVX, but because I hosted Ollama in a Proxmox virtual machine, the guest saw a virtualized “QEMU Virtual CPU” instead. At the time of writing, that generic QEMU CPU model doesn’t seem to expose the AVX instruction set to the guest, so the runner crashed with an illegal-instruction signal as soon as it executed an AVX instruction.

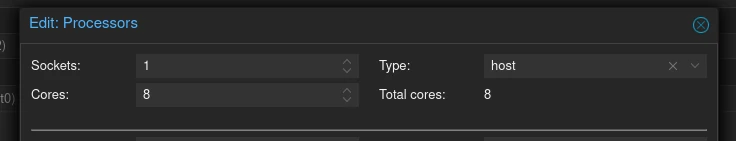

To fix this, I changed the processor type in the VM hardware options from a QEMU variant to ‘host’, which passes the host CPU’s features through to the guest. This works because my host CPU supports AVX. After a reboot, the issue was resolved.
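To check whether the guest actually sees AVX, before or after changing the processor type, you can inspect the CPU flags the kernel reports. The following is a minimal sketch, assuming an x86 Linux guest where `/proc/cpuinfo` contains a `flags` line; the helper names `cpu_flags` and `has_avx` are my own and not part of Ollama or Proxmox.

```python
from pathlib import Path


def cpu_flags(cpuinfo_text: str) -> set:
    """Return the feature flags from /proc/cpuinfo-style text (x86 layout assumed)."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            # The first "flags" line is enough: all cores normally report the same set.
            return set(value.split())
    return set()


def has_avx(cpuinfo_text: str) -> bool:
    """True if the exact flag 'avx' is present (a lone 'avx2' does not count)."""
    return "avx" in cpu_flags(cpuinfo_text)


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        text = cpuinfo.read_text()
        print("AVX available" if has_avx(text) else "AVX missing")
    else:
        print("/proc/cpuinfo not found (not a Linux system?)")
```

Run inside the VM, this should report AVX as missing with the generic QEMU CPU type and as available once the type is set to ‘host’, provided the host CPU supports it.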

Future Ollama updates might support inference on CPUs without AVX. Follow this issue on GitHub for updates.

10/3/2023